How Customer Health Score Automation Turns Monday Morning Data Scrambles into Five-Minute Portfolio Briefings

Weekly account health digests replace hours of spreadsheet stitching with scored, prioritized renewal intelligence for customer success teams.

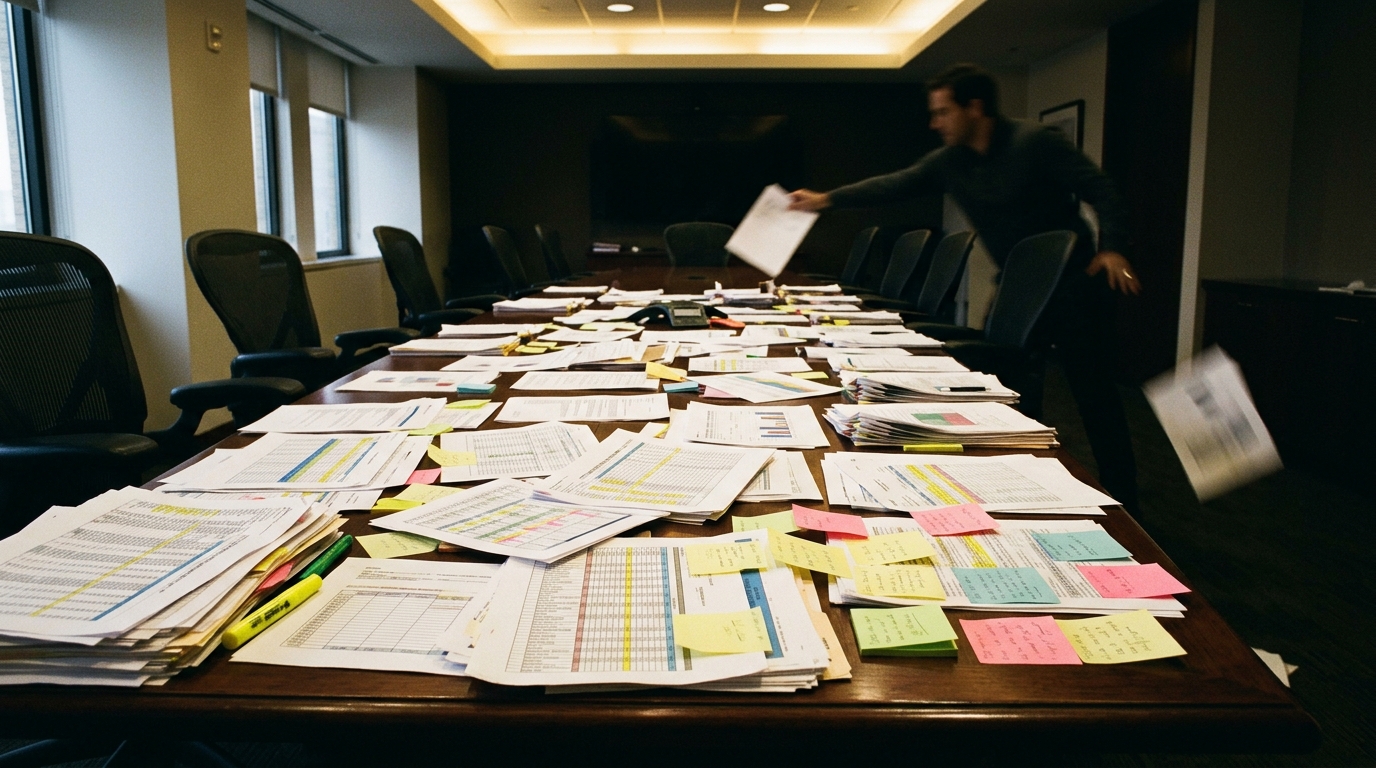

The Monday That Starts with Four Browser Tabs and a Stale Spreadsheet

It is 8:15 on a Monday morning. A Customer Success Manager at a mid-market cybersecurity company has 120 enterprise accounts in the portfolio. The pipeline call is at 10. Somewhere in those 120 accounts, engagement has dropped, support tickets have spiked, and a renewal is quietly approaching. The CSM's job right now is to figure out which accounts need attention before the call starts.

So the routine begins. Pull the usage dashboard in one tab. Export last week's support tickets from the help desk in another. Open the CRM to cross-reference renewal dates. Paste it all into a spreadsheet that was last updated on Thursday.

The spreadsheet has seven columns per account. Engagement score change, support ticket count for the past seven days, usage decline percentage, renewal date, days to renewal, current health status, and a notes column nobody reads. With 120 rows, the CSM is doing mental math on every line. Did that engagement score drop 28% or 35%? Was the ticket spike this week or last week? Is the renewal in 55 days or 85?

The thresholds are in the CSM's head, not in the data. An engagement drop above 30% is bad. More than three support tickets in a week is a flag. Usage decline above 25% means something broke or someone stopped caring. A renewal within 90 days and showing any of those signals is the kind of account that keeps you up at night.

But scrolling 120 rows with those rules held in working memory is a recipe for recency bias. The account that emailed yesterday gets attention. The account that went quiet three weeks ago does not. And quiet is the dangerous one.

Customer success professionals routinely spend hours each week on the wrong accounts. Not because they do not care, but because the manual review process structurally favors noise over signal. The account yelling loudest gets the call. The account declining silently across three metrics gets nothing until the renewal conversation, when it is too late.

Product usage declines by an average of 41% in the quarter before cancellation, giving teams a roughly 90-day warning window (Focus Digital, 2025). That window is generous. But when the person responsible for catching the decline spends Monday morning building a spreadsheet that was already outdated by Friday, the 90 days shrink to almost nothing.

Why Your Portfolio Review Breaks at Eighty Accounts

The core difficulty is not that the data is hard to get. Most teams can pull engagement scores, support ticket counts, and renewal dates from their existing stack. The difficulty is that the judgment call happens at the intersection of three or four metrics, and that intersection is different for every account.

Consider the account where engagement dropped 35%, support tickets climbed to six in a week, and usage declined 28%. Each number is concerning on its own. Together, with a renewal 104 days out, they form a pattern that demands a specific response: schedule a quarterly business review, audit the support tickets for recurring blockers, and assign a technical account manager for hands-on reactivation. But the CSM scanning a spreadsheet might notice the engagement drop, miss the ticket spike, and never connect either to the renewal timeline. The signal is spread across four columns, and the human brain is not a threshold engine built to track four metrics at once.

Customer health score automation is the practice of continuously evaluating account-level metrics against configurable thresholds and surfacing scored, prioritized risk assessments without manual data assembly. Many customer success professionals struggle to segment their portfolios effectively, which means prioritization decisions often rest on incomplete mental models, even when the underlying data exists in their systems.

This is where spreadsheet-based tracking collapses. It works for 20 accounts. At 80, the CSM spends more time maintaining the spreadsheet than acting on what it shows. No alerts, no prioritization, no recommended actions. Just rows.

The same structural failure hits a CS Director at a 200-person HR tech company overseeing 400 SMB accounts across three reps. The Director needs a portfolio-level risk view for the VP by Thursday. Building it requires exporting data from three systems, normalizing it into a single format, and eyeballing 400 rows to separate healthy from at-risk. That is a two-day exercise. By the time the summary reaches the VP, two accounts in the at-risk column have already had their renewal conversations without anyone on the team knowing the engagement scores had cratered.

Enterprise platforms like Gainsight and ChurnZero can solve parts of this, but they can bring six-figure contracts, month-long implementations, and health scores too complex for the team to trust. A CS leader once put it bluntly: "We built a health score with 30 variables. No one on the team trusted it." The tooling exists. The adoption gap is the problem.

And copying account data into a general-purpose chat interface does not scale either. It works for analyzing one account at a time, but it cannot run on a schedule, apply consistent thresholds across 120 accounts, or deliver a prioritized digest to your inbox at the start of the week. You would need to paste 120 records, remember the thresholds, and interpret the output yourself. Every Monday. That is just the spreadsheet with extra steps.

The account that churns is rarely the one that complained the loudest. It is the one that went quiet across three metrics while the renewal clock kept ticking.

lasa.ai builds AI agents for exactly this kind of multi-metric monitoring: pull account data, score it against your thresholds, flag at-risk renewals, and deliver a prioritized weekly digest with recommended actions.

What Changes When the Digest Builds Itself

The shift is not from manual to automated. It is from reactive to preemptive. Instead of starting Monday by building a view of the portfolio, the Customer Success Manager starts Monday by reading one.

An AI agent pulls the account data at the start of the week. It applies the same thresholds every time: engagement drop above 30%, more than three support tickets in seven days, usage decline above 25%. It checks every account against the 90-day renewal window. It scores each one as healthy, needs attention, or at-risk. And for the at-risk accounts, it does something the spreadsheet never could: it generates specific, tailored recommendations based on the combination of signals present.

This is not a dashboard you have to go check. It is a delivered briefing.

The distinction matters because the agent follows a defined, auditable process under the hood. Every account gets evaluated against the same criteria. Every threshold is documented. Every recommendation traces back to the specific signals that triggered it. This is what separates an AI agent from a black-box prediction: agent-level outcomes with workflow-level reliability. The process runs the same way every week, and you can see exactly why each account landed where it did.

From Raw Account Data to Prioritized Action in Four Steps

Here is what actually happens when the agent runs.

First, it loads the account portfolio and the configured thresholds. For a Customer Success Manager at a cybersecurity company, that means pulling engagement score changes, support ticket counts for the past seven days, usage decline percentages, renewal dates, and current health status for every account. The thresholds are explicit: engagement drop alert at 0.30, support ticket volume alert at 3, usage decline alert at 0.25, renewal risk window at 90 days.
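As a rough illustration of this first step, the thresholds and account fields could be written down as explicit data rather than held in someone's head. This is a minimal sketch, not the agent's actual implementation: the class names, field names, and the `load_account` helper are all hypothetical, and only the four threshold values come from the description above.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Thresholds:
    # Values quoted in the article; the field names are illustrative.
    engagement_drop_alert: float = 0.30   # fractional drop in engagement score
    support_ticket_alert: int = 3         # tickets in the past seven days
    usage_decline_alert: float = 0.25     # fractional decline in usage
    renewal_risk_window_days: int = 90


@dataclass(frozen=True)
class Account:
    name: str
    engagement_score_change: float  # negative means a drop, e.g. -0.35
    support_tickets_7d: int
    usage_decline: float            # positive means decline, negative means growth
    renewal_date: date
    health_status: str

    def days_to_renewal(self, today: date) -> int:
        """Whole days between `today` and the renewal date."""
        return (self.renewal_date - today).days


def load_account(row: dict) -> Account:
    """Convert one exported row of string values into a typed Account."""
    return Account(
        name=row["name"],
        engagement_score_change=float(row["engagement_score_change"]),
        support_tickets_7d=int(row["support_tickets_7d"]),
        usage_decline=float(row["usage_decline"]),
        renewal_date=date.fromisoformat(row["renewal_date"]),
        health_status=row["health_status"],
    )
```

Keeping the thresholds in one frozen object means every account is checked against the same documented numbers, and changing a threshold is a one-line edit rather than a new habit to remember.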

Second, it filters. Every account is evaluated against those thresholds using deterministic logic, not a prediction model. An account with an engagement score change of -0.35, six support tickets in seven days, and a usage decline of 0.28 (28%) gets flagged immediately. An account with an engagement change of -0.15, one support ticket, and 10% usage growth stays in the healthy column. No judgment required for this step. Just math.
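The filtering step is simple enough to sketch in a few lines. The version below is an assumption-laden illustration: the `Metrics` type, the signal names, and the sign conventions (negative engagement change means a drop, negative usage decline means growth) are hypothetical choices, while the three threshold values are the ones stated above. Comparisons are strict, matching "above 30%" and "more than three".

```python
from dataclasses import dataclass

# Threshold values from the article; the constant names are illustrative.
ENGAGEMENT_DROP_ALERT = 0.30
SUPPORT_TICKET_ALERT = 3
USAGE_DECLINE_ALERT = 0.25


@dataclass(frozen=True)
class Metrics:
    engagement_score_change: float  # -0.35 means a 35% drop
    support_tickets_7d: int
    usage_decline: float            # 0.28 means a 28% decline, -0.10 means 10% growth


def risk_signals(m: Metrics) -> list[str]:
    """Return the names of every threshold this account has crossed."""
    signals = []
    if -m.engagement_score_change > ENGAGEMENT_DROP_ALERT:
        signals.append("engagement_drop")
    if m.support_tickets_7d > SUPPORT_TICKET_ALERT:
        signals.append("support_ticket_spike")
    if m.usage_decline > USAGE_DECLINE_ALERT:
        signals.append("usage_decline")
    return signals


# The two accounts described above.
flagged = risk_signals(Metrics(-0.35, 6, 0.28))   # all three signals fire
healthy = risk_signals(Metrics(-0.15, 1, -0.10))  # no signals fire
```

Returning the list of signals, rather than a single true or false, is what lets a later step explain why an account landed where it did.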

Third, it analyzes the at-risk accounts. This is where the AI reasoning matters. An account with all three signals firing and a renewal 38 days out gets classified as critical priority with recommendations to initiate executive outreach, deep-dive the support tickets, and present a customized value realization plan. An account with the same signals but a renewal 104 days out gets classified as high priority with a different playbook: schedule a quarterly business review, audit the ticket patterns, assign a technical account manager. Same signals, different urgency, different actions.
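The urgency split between those two accounts can be approximated with a small rule. This sketch is a deterministic stand-in, not the AI reasoning the agent applies: it assumes the 90-day renewal window is the cutoff between critical and high (the article only gives the 38-day and 104-day examples), and it looks up recommendations from a fixed table built from the two playbooks quoted above. The function and constant names are hypothetical.

```python
from typing import Optional

RENEWAL_RISK_WINDOW_DAYS = 90  # from the configured thresholds

# Playbooks taken from the two examples in the article.
PLAYBOOKS = {
    "critical": [
        "Initiate executive outreach",
        "Deep-dive the support tickets",
        "Present a customized value realization plan",
    ],
    "high": [
        "Schedule a quarterly business review",
        "Audit the support ticket patterns",
        "Assign a technical account manager",
    ],
}


def classify_priority(signals: list[str], days_to_renewal: int) -> Optional[str]:
    """Assumed rule: any signal inside the renewal window is critical,
    any signal outside it is high, and no signals means no priority."""
    if not signals:
        return None
    if days_to_renewal <= RENEWAL_RISK_WINDOW_DAYS:
        return "critical"
    return "high"


def recommended_actions(priority: Optional[str]) -> list[str]:
    """Return a copy of the playbook for a priority, or an empty list."""
    return list(PLAYBOOKS.get(priority, []))
```

A real agent would tailor the actions to the combination of signals present; the lookup table here only shows how the same signals can map to different playbooks once renewal timing is taken into account.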

Fourth, it generates the digest. The output opens with a portfolio summary showing total accounts, healthy count, and at-risk count. Then it lists each at-risk account with its specific risk signals, renewal date, days to renewal, priority level, and three recommended actions. Healthy accounts get their own section with engagement trends and renewal timelines, so nothing falls through. The digest closes with portfolio-wide recommendations grouped by urgency: critical first, then high.
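To make the digest structure concrete, here is a minimal plain-text formatter. It is a sketch under stated assumptions: the input is a list of dictionaries with hypothetical keys (`name`, `priority`, `days_to_renewal`, `signals`, `actions`), accounts with a priority of `None` are treated as healthy, and the closing portfolio-wide recommendations section is left out for brevity.

```python
# Sort order for at-risk accounts: critical first, then high.
PRIORITY_ORDER = {"critical": 0, "high": 1}


def build_digest(accounts: list[dict]) -> str:
    """Render a portfolio summary, then at-risk accounts, then healthy ones."""
    at_risk = [a for a in accounts if a["priority"] is not None]
    healthy = [a for a in accounts if a["priority"] is None]
    # Within a priority level, the nearest renewal comes first.
    at_risk.sort(key=lambda a: (PRIORITY_ORDER[a["priority"]], a["days_to_renewal"]))

    lines = [
        "Portfolio summary",
        f"Total accounts: {len(accounts)}",
        f"Healthy: {len(healthy)}",
        f"At-risk: {len(at_risk)}",
        "",
        "At-risk accounts",
    ]
    for a in at_risk:
        lines.append(f"{a['name']} [{a['priority']}] renews in {a['days_to_renewal']} days")
        lines.append("  Signals: " + ", ".join(a["signals"]))
        for action in a["actions"]:
            lines.append(f"  - {action}")

    lines += ["", "Healthy accounts"]
    for a in healthy:
        lines.append(f"{a['name']} renews in {a['days_to_renewal']} days")
    return "\n".join(lines)
```

Sorting by priority and then by days to renewal puts the account that needs a call today at the top of the page, which is the point of a briefing over a dashboard.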

For a VP of Customer Success at a vertical SaaS company serving manufacturing, the signal types shift from cybersecurity engagement metrics to manufacturing-specific usage patterns, but the scored briefing looks the same: account, risk signals, days to renewal, priority, recommended actions. A VP who discovers after a quarterly business review that three at-risk renewals worth $180,000 in ARR were buried in a spreadsheet nobody reviewed in two weeks is exactly the person who needs the digest to arrive before the meeting, not after.

What Tuesday Looks Like When the Agent Runs Monday Morning

The Monday scramble disappears. The CSM reads the digest in five minutes and knows which accounts need calls today, which need a quarterly business review scheduled this week, and which are healthy enough to leave alone until next Monday.

The account with a 35% engagement drop, six support tickets, and a renewal in 104 days has three specific actions attached. The CSM picks up the phone, references the exact signals, and has a conversation that sounds proactive instead of reactive. The account champion hears "we noticed your team's usage dropped 28% last week and you had six support tickets, so we wanted to check in" instead of "hey, just touching base before your renewal." Customers overwhelmingly report that being contacted proactively improves their perception of the business. The difference between those two calls can be the difference between a renewal and a churned account.

The critical account, the one with a 40% engagement drop, eight support tickets, 35% usage decline, and a renewal in 38 days, gets the executive escalation it needed. Without the digest, that account might have surfaced at the next pipeline call when someone asked, "What's happening with that account?" With the digest, it surfaced Monday morning with a priority label and a playbook.

Teams that automate account health monitoring often extend to adjacent sales operations processes. A deal signal monitoring agent watches target accounts for buying signals and alerts deal owners with recommended next steps. A pipeline stale deal checker flags opportunities that have been sitting in a stage too long. The pattern is the same: take a portfolio of entities, score them against thresholds, surface the ones that need action, and tell the person responsible what to do first.

Whether you cover 120 enterprise cybersecurity accounts, 400 SMB HR tech accounts, or a portfolio of manufacturing clients with $180,000 in ARR on the line, the morning changes the same way. You stop building the spreadsheet. You start reading the briefing. And the account that would have churned in Q2 because nobody saw the signals gets a call on Monday instead.

lasa.ai builds AI agents that turn multi-metric portfolio data into weekly prioritized digests with recommended actions. The same pattern applies to customer success, property management, higher education retention, and any domain where entities approach deadlines while declining on multiple signals.

See What This Looks Like for Your Process

Let's discuss how lasa.ai can automate this for your team.