How an AI Agent Turns 250 Resumes into a Scored Shortlist Before Your Coffee Gets Cold

Resume screening automation for recruiting teams that need consistent candidate evaluation at scale, not keyword filtering.

The Afternoon You'll Never Get Back

You're a recruiter at a 300-person healthcare network, and you have five open nursing requisitions. Each one pulled between 120 and 180 applicants over the past two weeks. That's somewhere around 750 resumes sitting in your queue, and the hiring managers for three of those reqs sent you the same Slack message this morning: "Where's my shortlist?"

So you start. You pull up the first resume, cross-reference it against the job requirements document, check whether the candidate holds an active RN license (must-have), scan for ICU experience (must-have), look for charge nurse or preceptor background (nice-to-have), note the years of experience, flag a nine-month gap between positions two and three, and make a call. Advance, hold, or pass. That took you six minutes. You have 749 to go.

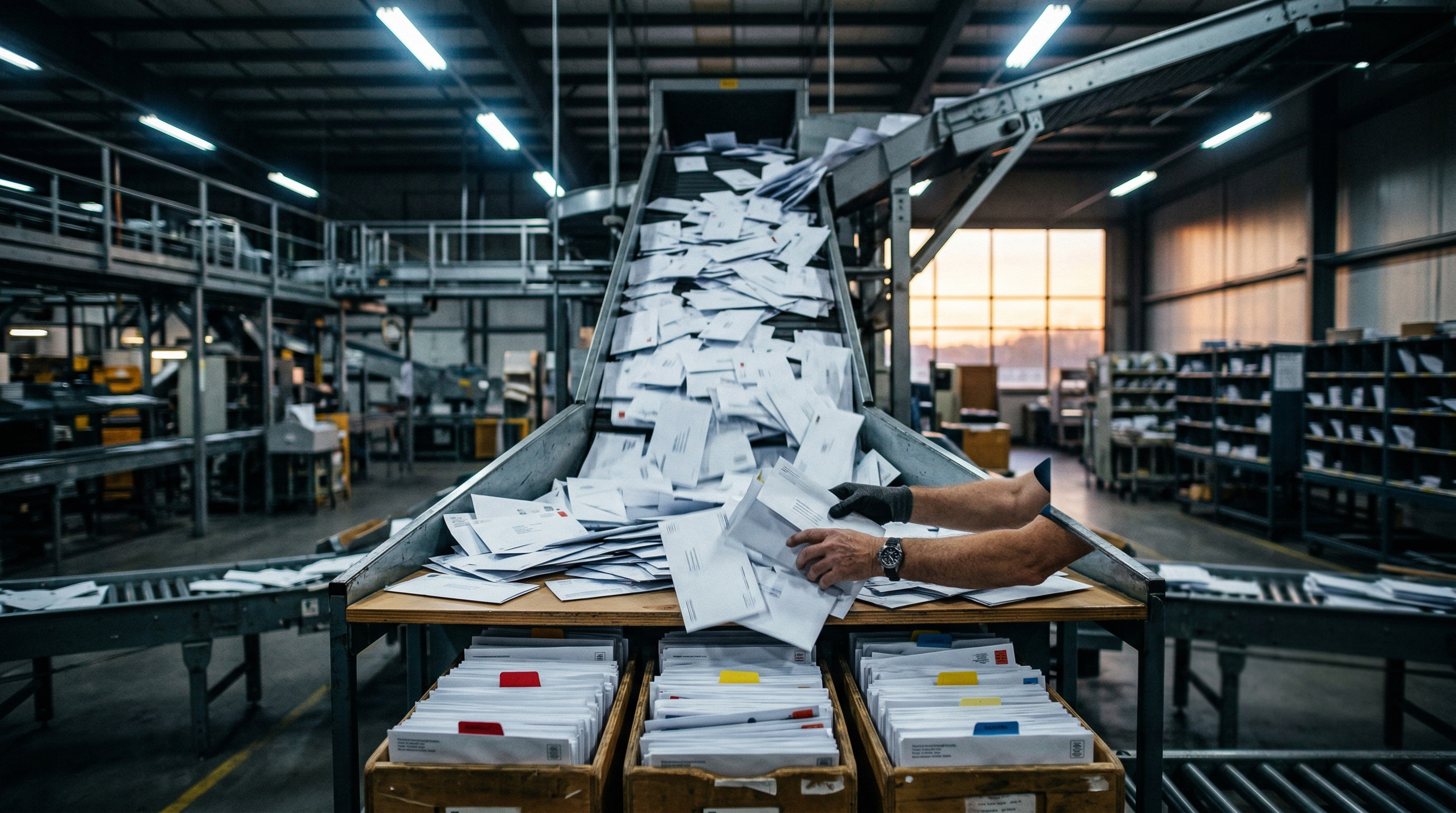

By resume number 25, you're skimming. By resume 40, you can't remember whether the candidate you flagged as "strong maybe" had the required certification or just mentioned it in a cover letter. By the afternoon, you're making faster calls because the pile isn't getting smaller and the hiring manager just pinged again. A recruiter spends approximately 23 hours screening resumes for a single hire (Equip.co, 2026). Across five open reqs, that's 115 hours of screening work: nearly three full 40-hour work weeks, just to produce five shortlists.

That math alone would be bad enough. But the real damage is quieter.

The Consistency Problem Nobody Measures

The time cost is visible. Everyone sees it. What most recruiting teams never quantify is how inconsistent the screening actually is.

Resume screening consistency, sometimes called inter-rater reliability, is the degree to which different reviewers arrive at the same advance/hold/pass decision for the same candidate. In manual resume screening, inter-rater reliability sits at 60-70%, meaning two recruiters reviewing the same batch will disagree on roughly a third of candidates (Equip.co, 2026). Consistency gets worse, not better, as the day goes on. Decision quality drops noticeably after the first 20-30 resumes in a session. The must-have criteria that were binary pass/fail at 9 AM become judgment calls by 2 PM.
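
To make that percentage concrete, here is a minimal Python sketch of raw percent agreement between two reviewers. The reviewer decisions are hypothetical. The 60-70% figure above doesn't say which statistic it uses, and formal reliability studies often prefer chance-corrected measures such as Cohen's kappa. This sketch shows only the simplest version.

```python
from typing import Sequence


def percent_agreement(reviewer_a: Sequence[str], reviewer_b: Sequence[str]) -> float:
    """Share of candidates (0.0 to 1.0) on which two reviewers made the same call."""
    if len(reviewer_a) != len(reviewer_b):
        raise ValueError("Both reviewers must rate the same candidates")
    if not reviewer_a:
        raise ValueError("Need at least one candidate")
    matches = sum(a == b for a, b in zip(reviewer_a, reviewer_b))
    return matches / len(reviewer_a)


# Hypothetical advance/hold/pass calls on the same six resumes
a = ["advance", "hold", "pass", "advance", "hold", "pass"]
b = ["advance", "pass", "pass", "hold", "hold", "pass"]
print(f"{percent_agreement(a, b):.0%}")  # 67%
```

Four matching calls out of six is 67%, inside the 60-70% band. The two reviewers disagree on a third of even this small batch.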

This isn't a discipline problem. It's a cognitive load problem. Take a software engineering requisition. You're checking must-have skills like Python, AWS, and Kubernetes (pass/fail each), scoring nice-to-have skills like system architecture, distributed systems, Go, and Terraform on a gradient, flagging employment gaps over 12 months and tenures shorter than 18 months, and calculating a weighted composite score where must-haves carry 70% and nice-to-haves carry 30%. That's structured analytical work that requires sustained attention. The human brain is not built to do it 180 times in a row and produce the same quality of output on attempt 180 as on attempt 1.

The same structural problem shows up anywhere documents get evaluated against rubrics under deadline pressure. A commercial loan analyst at a regional bank receives a business loan package and must cross-reference tax returns, financial statements, and collateral documentation against 12 underwriting criteria. Mandatory ratios get checked as pass/fail, desirable factors like existing deposit relationships get scored on a gradient, and concerns like declining quarterly revenue get flagged. That manual review consumes 3-5 business days of initial processing per application (National Funding, 2025). The vocabulary changes from "must-have skills" to "mandatory coverage ratios," but the failure mode is identical: reviewer fatigue degrades evaluation quality before the stack is finished.

Here's what makes this particularly hard to solve with simple automation. Your ATS can filter on keyword presence, but a candidate who lists "Python" in a skills section gets the same treatment as one who led a Python migration that reduced latency by 30%. 88% of employers report that qualified candidates get filtered out because keyword-based systems can't distinguish between mention and mastery (Harvard Business School / DAVRON, 2025). Spreadsheet rubrics work for 15 applicants. At 150, they become a data entry marathon where multiple reviewers overwrite each other's scores and nobody goes back to audit whether the rubric was actually followed. And copying resumes into a chat window one at a time gives you a rough evaluation, but you can't schedule it, audit it, share it with a team, or connect it to your applicant tracking system.
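
Here is a deliberately naive keyword filter in Python that shows the mention-versus-mastery problem. It is a hypothetical illustration of keyword matching in general. It does not reproduce the logic of any particular ATS.

```python
import re


def keyword_filter(resume_text: str, required: list[str]) -> bool:
    """Naive check: pass if every required keyword appears anywhere as a whole word."""
    text = resume_text.lower()
    return all(re.search(rf"\b{re.escape(k.lower())}\b", text) for k in required)


listed = "Skills: Python, Excel, SQL"
led = "Led a Python migration that reduced latency by 30%."

for resume in (listed, led):
    # Prints True for both: the filter cannot tell a mention from mastery
    print(keyword_filter(resume, ["Python"]))
```

Both resumes clear the filter identically, because the check only asks whether the word appears. It cannot detect the migration, the scope of the work, or the 30% latency reduction.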

The gap isn't between manual and automated. It's between evaluation that degrades with volume and evaluation that doesn't.

This is the problem lasa.ai solves: an AI agent that scores every resume against your weighted criteria with the same rigor on candidate 200 as on candidate 1.

See what this looks like for your hiring process →

What Changes When Every Resume Gets the Same Evaluation

The answer isn't faster screening. Faster screening with the same inconsistency just produces bad shortlists sooner.

The answer is structured evaluation that holds up at volume. Every resume gets parsed against the same weighted criteria. Must-have skills are checked as pass/fail. Nice-to-have skills are scored on a 0-100 gradient. Employment gaps and short tenures are flagged against configurable thresholds (not the recruiter's mood at 3 PM). A composite fit score determines the recommended action: advance to phone screen, hold for further review, or pass. The recruiter reviews a scored shortlist with flagged concerns and a recommended next step for each candidate, not a raw stack of 200 resumes.

The agent delivers outcomes. But under the hood, it follows a defined, auditable process. Every evaluation uses the same criteria in the same order with the same weights. If a hiring manager asks why a candidate scored 77 out of 100, the answer is traceable: must-haves all passed, nice-to-have scores were 100 for system architecture and 0 for distributed systems, Go, and Terraform, and a concern was flagged because total experience fell below the eight-year minimum. Agent-level outcomes with workflow-level reliability.
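
The following minimal Python sketch shows one way to reproduce the 77. The article gives the 70/30 weights and the individual scores but not how they are combined, so two things here are assumptions. The first is that the must-have component is the share of must-haves passed, scaled to 100. The second is that the nice-to-have component is the plain average of the four gradient scores. Under those assumptions the raw composite is 0.7 × 100 + 0.3 × 25 = 77.5. That matches 77 only if the score is truncated. Ordinary rounding would give 78.

```python
import math


def fit_score(must_have: dict[str, bool], nice_to_have: dict[str, float],
              must_weight: float = 0.70, nice_weight: float = 0.30) -> int:
    """Composite fit score on a 0-100 scale.

    Assumed aggregation (not confirmed by the article):
      must-have component    = share of must-haves passed, scaled to 0-100
      nice-to-have component = unweighted mean of the 0-100 gradient scores
    """
    if not must_have or not nice_to_have:
        raise ValueError("Need at least one must-have and one nice-to-have")
    must_component = 100 * sum(must_have.values()) / len(must_have)
    nice_component = sum(nice_to_have.values()) / len(nice_to_have)
    raw = must_weight * must_component + nice_weight * nice_component
    # Truncate to an integer. The tiny epsilon keeps float noise such as
    # 69.99999999999999 from dropping a true 70 down to 69.
    return math.floor(raw + 1e-9)


score = fit_score(
    {"Python": True, "AWS": True, "Kubernetes": True},
    {"system architecture": 100, "distributed systems": 0, "Go": 0, "Terraform": 0},
)
print(score)  # 77  (0.7 * 100 + 0.3 * 25 = 77.5, truncated)
```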

From Inbox to Scored Shortlist in Four Steps

Here's what actually happens when a resume arrives for a Staff Software Engineer requisition at a mid-size healthcare technology company.

The agent reads everything at once. It ingests the candidate's resume alongside three reference documents: the job requirements (must-have skills, nice-to-have skills, minimum experience, education requirements, and weighted score distribution), the alert thresholds (maximum employment gap of 12 months, minimum tenure of 18 months), and the requisition metadata (job title, department, target location, visa sponsorship requirements). A recruiter doing this manually toggles between four browser tabs. The agent holds all four documents in context simultaneously.
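
The three reference documents can be modeled as plain configuration objects, so every resume is scored against the same inputs. This Python sketch uses hypothetical class and field names. The values marked hypothetical (education, department, location, sponsorship) are placeholders the article doesn't specify.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class JobRequirements:
    must_have: tuple[str, ...]     # checked pass/fail
    nice_to_have: tuple[str, ...]  # scored 0-100
    min_years_experience: float
    education: str
    must_have_weight: float = 0.70
    nice_to_have_weight: float = 0.30

    def __post_init__(self) -> None:
        # Reject weight splits that don't add up to 100%
        total = self.must_have_weight + self.nice_to_have_weight
        if abs(total - 1.0) > 1e-9:
            raise ValueError(f"Score weights must sum to 1.0, got {total}")


@dataclass(frozen=True)
class AlertThresholds:
    max_gap_months: int = 12
    min_tenure_months: int = 18


@dataclass(frozen=True)
class RequisitionMetadata:
    job_title: str
    department: str
    target_location: str
    sponsors_visa: bool


@dataclass(frozen=True)
class ScreeningContext:
    """The three reference documents, held together for every resume."""
    requirements: JobRequirements
    thresholds: AlertThresholds
    metadata: RequisitionMetadata


ctx = ScreeningContext(
    requirements=JobRequirements(
        must_have=("Python", "AWS", "Kubernetes"),
        nice_to_have=("system architecture", "distributed systems", "Go", "Terraform"),
        min_years_experience=8,
        education="Bachelor's degree or equivalent",  # hypothetical value
    ),
    thresholds=AlertThresholds(),
    metadata=RequisitionMetadata(
        job_title="Staff Software Engineer",
        department="Engineering",       # hypothetical value
        target_location="Remote (US)",  # hypothetical value
        sponsors_visa=False,            # hypothetical value
    ),
)
```

Freezing the objects fits the consistency argument. The criteria cannot change between candidate 1 and candidate 200 unless someone deliberately builds a new context.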

It extracts a structured candidate profile. From an unstructured resume, the agent pulls contact information, a skills inventory, a chronological work history with employer names, role titles, dates, and key achievements, plus education details. This isn't keyword extraction. It's reading comprehension. When a resume says "Led migration of core services to a major cloud provider, reducing latency by 30%," the agent understands that as cloud infrastructure experience with measurable impact, not just a keyword match on a provider name.
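
The extraction itself is reading comprehension done by the language model, and a short snippet can't reproduce that. What a snippet can show is the structured profile the extraction fills in. This Python sketch assumes a hypothetical schema (`Role`, `CandidateProfile`) and placeholder candidate details. The achievement line is the one quoted above.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class Role:
    employer: str
    title: str
    start: date
    end: date  # for a current role, pass date.today()
    achievements: list[str] = field(default_factory=list)

    def months(self) -> int:
        """Whole calendar months in the role (day of month ignored)."""
        return (self.end.year - self.start.year) * 12 + (self.end.month - self.start.month)


@dataclass
class CandidateProfile:
    name: str
    email: str
    location: str
    skills: list[str]
    work_history: list[Role]  # oldest first
    education: list[str] = field(default_factory=list)

    def total_experience_years(self) -> float:
        # Simple sum of role durations; overlapping roles would be double-counted
        return sum(role.months() for role in self.work_history) / 12


profile = CandidateProfile(
    name="Candidate A",             # placeholder
    email="candidate@example.com",  # placeholder
    location="Remote",              # placeholder
    skills=["Python", "AWS", "Kubernetes"],
    work_history=[
        Role("Company X", "Software Engineer", date(2018, 1, 1), date(2021, 1, 1)),
        Role("Company Y", "Senior Software Engineer", date(2021, 1, 1), date(2024, 1, 1),
             ["Led migration of core services to a major cloud provider, reducing latency by 30%"]),
    ],
)
print(profile.total_experience_years())  # 6.0
```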

It scores against the criteria. Must-have skills (Python, AWS, Kubernetes for this req) get evaluated as pass or fail. Nice-to-have skills (system architecture, distributed systems, Go, Terraform) get scored on a 0-100 scale based on depth of evidence in the resume. The agent flags concerns: an employment gap exceeding the 12-month threshold, a tenure shorter than 18 months, total experience below the minimum. It calculates a weighted composite score, 70% weight on must-haves and 30% on nice-to-haves, producing a single fit score between 0 and 100.
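
The gradient scores come from the agent's reading of the resume. The concern flags, however, are simple date arithmetic once the work history is structured. This minimal Python sketch assumes roles arrive as hypothetical (title, start, end) tuples sorted oldest first. It uses the article's 12-month gap and 18-month tenure thresholds as defaults.

```python
from datetime import date

Role = tuple[str, date, date]  # (title, start, end): hypothetical shape


def months_between(a: date, b: date) -> int:
    """Whole calendar months from a to b (day of month ignored)."""
    return (b.year - a.year) * 12 + (b.month - a.month)


def flag_concerns(roles: list[Role], min_years: float,
                  max_gap_months: int = 12, min_tenure_months: int = 18) -> list[str]:
    """Return human-readable concerns; roles must be sorted oldest first."""
    concerns = []
    for title, start, end in roles:
        tenure = months_between(start, end)
        if tenure < min_tenure_months:
            concerns.append(f"Short tenure: {title} lasted {tenure} months "
                            f"(minimum {min_tenure_months}).")
    # Compare each role's end date with the next role's start date
    for (_, _, prev_end), (title, next_start, _) in zip(roles, roles[1:]):
        gap = months_between(prev_end, next_start)
        if gap > max_gap_months:
            concerns.append(f"Employment gap of {gap} months before {title} "
                            f"(maximum {max_gap_months}).")
    total_years = sum(months_between(s, e) for _, s, e in roles) / 12
    if total_years < min_years:
        concerns.append(f"Candidate total experience is {total_years:g} years, "
                        f"which is below the minimum requirement of {min_years:g} years.")
    return concerns


roles = [
    ("Senior Engineer", date(2015, 1, 1), date(2016, 3, 1)),
    ("Staff Engineer", date(2017, 9, 1), date(2022, 7, 1)),
]
for concern in flag_concerns(roles, min_years=8):
    print(concern)
```

The experience message uses the same wording as the concern in the candidate scoring report. A fuller version would also need to handle overlapping roles, which this sketch double-counts.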

It recommends a next action. A fit score of 70 or above means advance to phone screen. Between 40 and 69, hold for further review. Below 40, pass. This routing is deterministic, not a suggestion the agent improvises. The thresholds are configurable. If your team decides the cutoff should be 65 instead of 70, you change the number. The logic doesn't drift.
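
Because the routing is a pure function of the score and two thresholds, it fits in a few lines. Here is a minimal Python sketch. The `hold` and `pass` labels are hypothetical; `phone_screen` is the action name used in the report example.

```python
def recommend(score: float, advance_at: float = 70, hold_at: float = 40) -> str:
    """Deterministic routing: the same score and thresholds always give the same action."""
    if not 0 <= hold_at <= advance_at <= 100:
        raise ValueError("Thresholds must satisfy 0 <= hold_at <= advance_at <= 100")
    if score >= advance_at:
        return "phone_screen"
    if score >= hold_at:
        return "hold"
    return "pass"


print(recommend(77))                 # phone_screen
print(recommend(67))                 # hold
print(recommend(67, advance_at=65))  # phone_screen once the cutoff is lowered
print(recommend(39))                 # pass
```

Moving the cutoff from 70 to 65 is a parameter change, not a prompt change. That is what keeps the routing from drifting.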

For a procurement analyst at a mid-market enterprise evaluating vendor proposals against an RFP, the data shape changes but the pattern holds. Must-have skills become mandatory qualifications (SOC 2 certification, HIPAA compliance, integration compatibility) checked as pass/fail. Nice-to-have skills become weighted scoring factors (pricing competitiveness, technical approach, reference quality) scored on a gradient. The employment gap flag becomes a financial stability flag. The scored shortlist becomes a ranked vendor evaluation with concern annotations. The procurement analyst reviews a stack-ranked list with flagged risks, not 12 unscored proposals.

What Lands on Your Desk

The output is a candidate scoring report. Not a summary paragraph. Not a thumbs-up or thumbs-down. A structured document the recruiter and hiring manager can both read and act on.

It opens with a candidate profile section: name, contact information, location, a professional summary, and a complete skills inventory. Below that, the work history is laid out chronologically with role titles, employers, dates, and key achievements pulled from the resume. This section alone saves the hiring manager from having to open the original resume. They can see the career arc at a glance.

The scoring section breaks down must-have evaluations (Python: pass, AWS: pass, Kubernetes: pass) and nice-to-have scores (system architecture: 100, distributed systems: 0, Go: 0, Terraform: 0). The composite fit score sits at the center of the report, 77 out of 100 in this case. Below it, flagged concerns are listed with specifics: "Candidate total experience is 6 years, which is below the minimum requirement of 8 years." Not a vague caution. A specific gap tied to a specific threshold.

The recommended action sits at the bottom. For a 77, that's "phone_screen" with a justification: candidate meets must-have requirements and scores well overall. The recruiter can agree, override, or escalate, but they're starting from a reasoned recommendation with supporting evidence, not a gut feeling formed at 4 PM on a Tuesday.
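
The report is easiest to pass between a recruiter, a hiring manager, and an ATS as structured data. This Python sketch assembles the scoring, concern, and recommendation sections described above into a JSON-serializable dictionary. The field names are hypothetical, and the profile and work-history sections are left out for brevity.

```python
import json


def build_report(name: str, must_have: dict[str, bool], nice_to_have: dict[str, int],
                 score: int, concerns: list[str], action: str, justification: str) -> dict:
    """Assemble a structured candidate scoring report (hypothetical field names)."""
    return {
        "candidate": {"name": name},
        "scoring": {
            "must_have": {skill: "pass" if ok else "fail" for skill, ok in must_have.items()},
            "nice_to_have": nice_to_have,
            "fit_score": score,
        },
        "concerns": concerns,
        "recommended_action": {"action": action, "justification": justification},
    }


report = build_report(
    name="Candidate A",  # placeholder
    must_have={"Python": True, "AWS": True, "Kubernetes": True},
    nice_to_have={"system architecture": 100, "distributed systems": 0, "Go": 0, "Terraform": 0},
    score=77,
    concerns=["Candidate total experience is 6 years, which is below the minimum requirement of 8 years."],
    action="phone_screen",
    justification="Candidate meets must-have requirements and scores well overall.",
)
print(json.dumps(report, indent=2))
```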

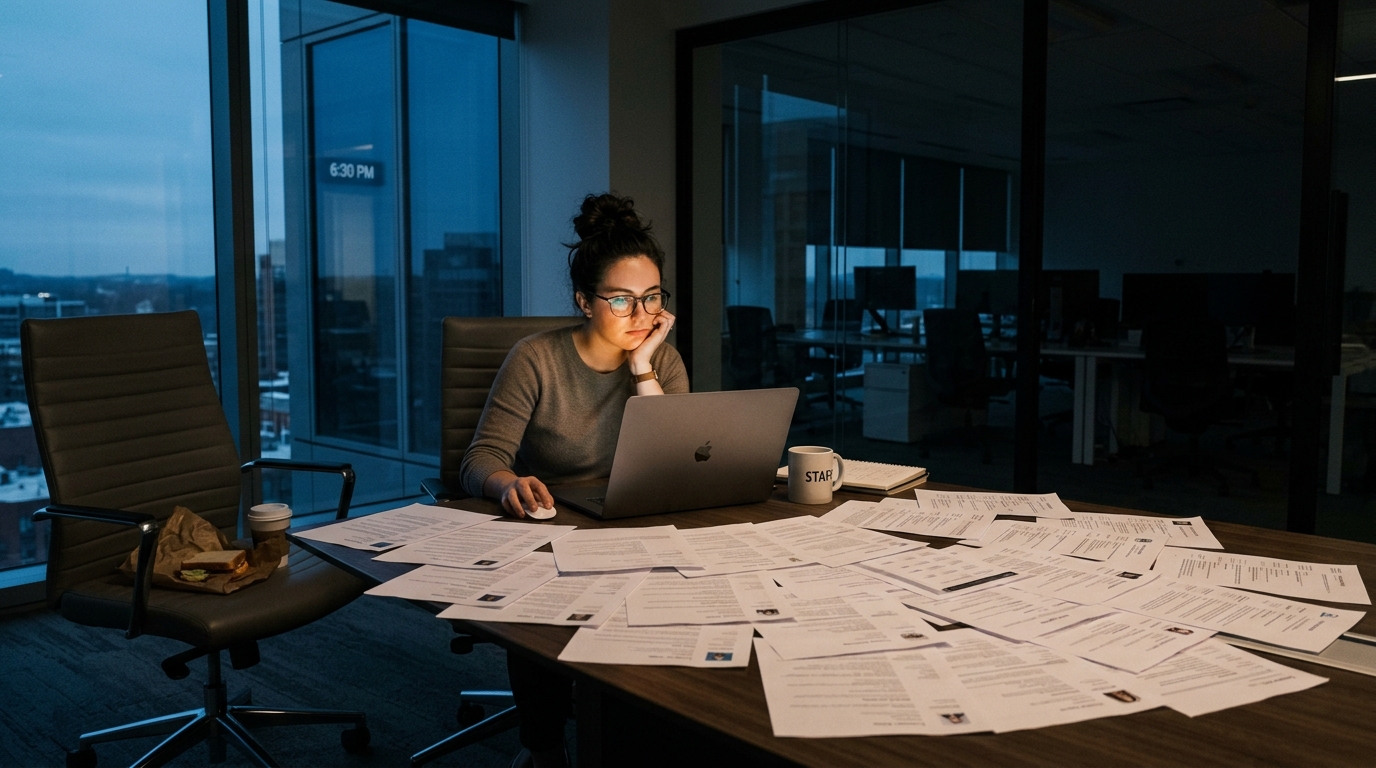

What Tuesday Looks Like When the Agent Runs on Monday

Think about the recruiting coordinator at a growing fintech startup that just closed a Series B. Application volume doubled overnight. They have 12 engineering positions to fill this quarter, and every req is pulling 150-200 applicants. Before, that meant triaging something like 2,000 resumes across 12 requisitions. The coordinator was spending entire days just trying to keep up with the intake, and hiring managers were still getting shortlists late.

Now each application is scored as it arrives. The coordinator reviews scored shortlists instead of raw resume stacks. The phone-screen candidates are already identified. The holds have flagged concerns that make the "maybe" pile actionable instead of infinite. The passes have a clear reason attached. The coordinator spends their morning discussing the shortlist with the hiring manager instead of building it.

The hiring manager gets what they actually wanted: a shortlist with reasoning. Not "here are my top five" with no explanation, but "here are the candidates who passed all must-haves and scored above 70 overall, and here's the one at 77 who's strong on cloud infrastructure but two years short on total experience. Worth a conversation?"

Teams that automate resume screening often extend the pattern to adjacent recruiting processes. The same structured evaluation that scores candidates against job requirements can compile interview preparation packets, matching each interviewer's focus area with tailored questions based on the candidate's profile and screening notes. The pattern scales because the work is the same: parse documents, evaluate against criteria, produce a structured output with a recommendation.

Whether you're screening roughly 750 nursing applicants across five reqs at a regional healthcare network, evaluating plant engineer applicants at a mid-size manufacturer where safety certifications are non-negotiable but buried in different resume formats, or triaging 200 engineering resumes at a fintech that just announced a funding round, the morning changes the same way. You stop reading resumes. You start reading scored evaluations. Decision quality improves because consistency improves, and the time comes back because the agent doesn't need 23 hours per hire.

That time was never supposed to be spent on screening anyway.

lasa.ai builds AI agents that handle document-to-disposition evaluation, whether the documents are resumes, loan packages, vendor proposals, or insurance submissions. The pattern is the same. The vocabulary changes.

If your team is spending days on work that should take minutes:

See what an agent looks like for your process →

Frequently Asked Questions

How long does it take to screen a resume manually?

What are the limitations of ATS resume screening?

How do you create a candidate scoring rubric that stays consistent?

Can AI replace recruiters in resume screening?

Why does my ATS keep filtering out qualified people?
